Tired of image benchmarks that don't show any images?

6 models evaluated on 192 prompts across 6 categories. Know which model is best — for your use case, your budget, your quality bar.

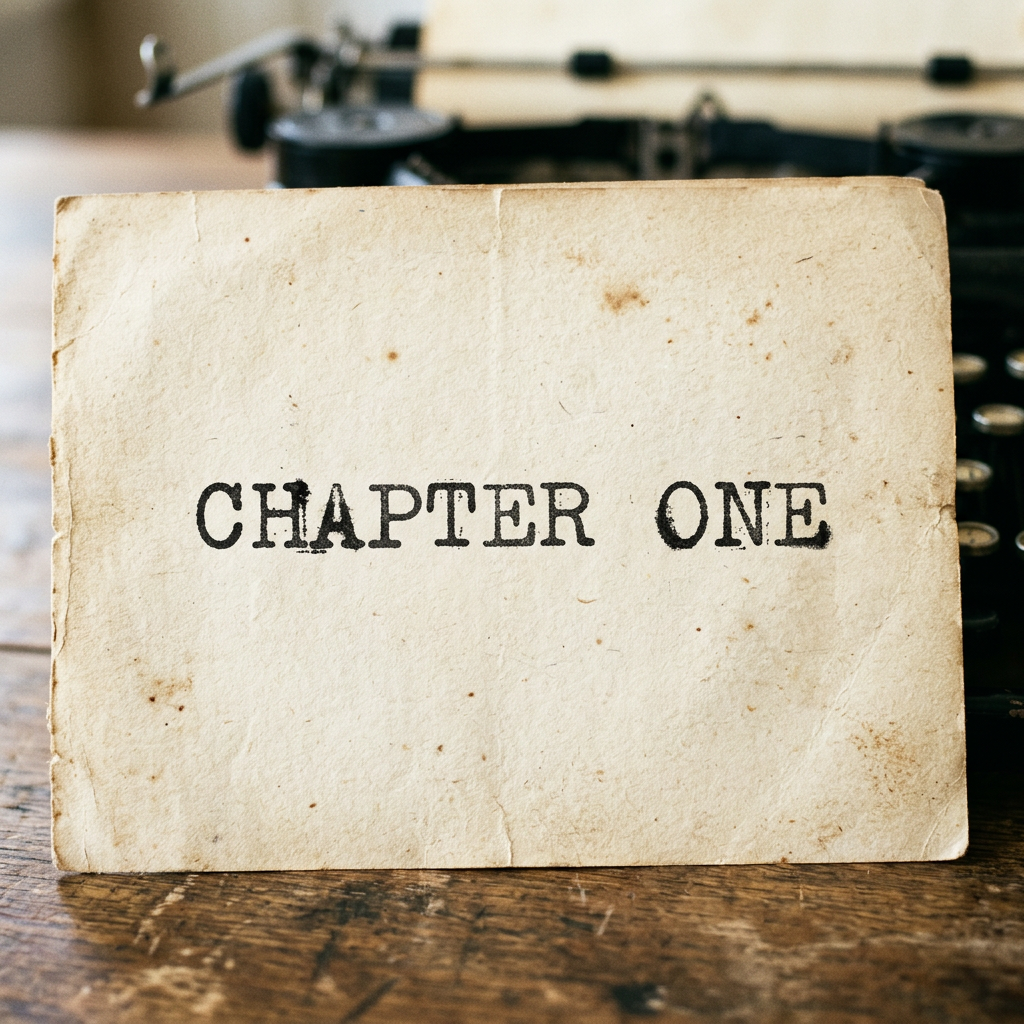

Text Rendering › Typography Style › Easy · fal-ai/nano-banana-2

Prompt: The word 'CHAPTER ONE' typed on aged paper with a vintage typewriter font, complete with slightly uneven ink

V1 Leaderboard

192 prompts, 6 categories, graded pass/fail by VLM judges.

| # | Model | Pass Rate | Pass / Fail | Avg Latency |
|---|---|---|---|---|
| 1 | fal-ai/nano-banana-2 | 94.8% | 182/10 | 28.1s |
| 2 | fal-ai/nano-banana-pro | 93.8% | 180/12 | 23.4s |
| 3 | bfl/flux-2-max | 91.7% | 176/16 | 26.7s |
| 4 | bfl/flux-2-pro | 82.3% | 158/34 | 11.8s |
| 5 | bfl/flux-2-klein-9b | 78.6% | 151/41 | 4.1s |
| 6 | bfl/flux-2-klein-4b | 74.0% | 142/50 | 3.8s |
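The pass rates in the leaderboard follow directly from the pass/fail counts over the 192 prompts. The sketch below recomputes and ranks them; the names `RESULTS`, `pass_rate`, and `leaderboard` are illustrative and not part of the benchmark's own tooling.

```python
# Pass/fail counts copied from the leaderboard table (pass, fail).
RESULTS = {
    "fal-ai/nano-banana-2": (182, 10),
    "fal-ai/nano-banana-pro": (180, 12),
    "bfl/flux-2-max": (176, 16),
    "bfl/flux-2-pro": (158, 34),
    "bfl/flux-2-klein-9b": (151, 41),
    "bfl/flux-2-klein-4b": (142, 50),
}


def pass_rate(passed: int, failed: int) -> float:
    """Return the share of graded prompts that passed, as a percentage."""
    total = passed + failed
    return 100.0 * passed / total


def leaderboard(results: dict[str, tuple[int, int]]) -> list[tuple[str, float]]:
    """Return (model, pass rate) pairs sorted from best to worst."""
    rows = [(model, pass_rate(p, f)) for model, (p, f) in results.items()]
    return sorted(rows, key=lambda row: row[1], reverse=True)


for rank, (model, rate) in enumerate(leaderboard(RESULTS), start=1):
    print(f"{rank}. {model}: {rate:.1f}%")
```

Ranking by pass rate alone ignores latency, so a reader with a tight latency budget may prefer to filter on the Avg Latency column first and then rank the remaining models.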

What we evaluate

Each model is tested across 6 categories with 192 prompts spanning easy to extreme difficulty.

Start learning

Comprehensive guides on image generation evaluation — from metrics to methodology.

Browse guides

Frequently asked questions

See how every model performs

Compare models side-by-side with our interactive benchmark explorer.

Explore ImageBench V1